Snapchat Under Fire: EU Child Safety Probe Targets Popular App

EU Takes Aim at Snapchat's Child Safety Practices

Snapchat is in the hot seat as the European Commission launches a formal investigation into its child safety protocols. The probe, conducted under the Digital Services Act (DSA), examines whether Snapchat meets the law's obligation to ensure a high level of privacy, safety, and security for minors.

The move underscores the EU's commitment to online safety, particularly for younger users. Under the DSA, platforms found non-compliant can be fined up to 6% of their global annual turnover.

Age Assurance Under the Microscope

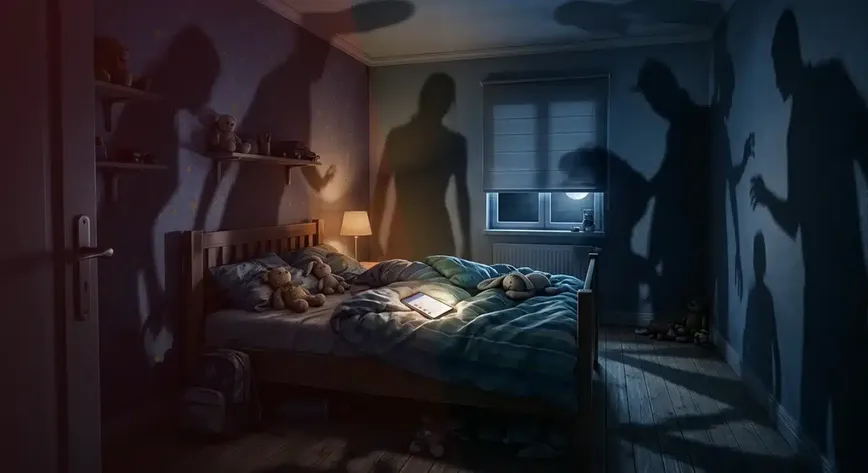

Central to the investigation is how Snapchat checks the ages of its users. Snapchat requires users to be at least 13 to join, but the Commission suspects that relying solely on self-declaration is inadequate. The concern is that this method may neither effectively keep out children under 13 nor reliably identify users under 17, leaving them without an age-appropriate experience.

“From grooming and exposure to illegal products to account settings that undermine minors’ safety, Snapchat appears to have overlooked that the Digital Services Act demands high safety standards for all users. With this investigation, we will closely look into their compliance with our legislation,” said Henna Virkkunen, Executive Vice-President for Tech Sovereignty, Security and Democracy.

The inquiry also examines how accessible Snapchat's tools for reporting underage users are, as well as the risk that minors are exposed to harmful content or contacted inappropriately by users who misrepresent their age.

Privacy and Content Moderation Concerns

The Commission is also scrutinizing Snapchat's default privacy settings, which it suspects may not adequately protect minors. Features such as "Find Friends" and push notifications that are enabled by default are under review.

Beyond privacy, the Commission is examining how Snapchat handles illegal content. It questions whether the app effectively curbs the spread of illegal and harmful material, including drugs and age-restricted products, and whether its mechanisms for reporting illegal content are user-friendly and easy to access.

Next Steps in the Investigation

The Commission will now carry out an in-depth investigation, gathering further evidence and potentially conducting interviews or inspections. The proceedings could lead to further enforcement steps, such as interim measures or a non-compliance decision, requiring Snapchat to strengthen its protections for minors.

This investigation is part of the EU's broader push under the DSA to enforce stricter online child protection across platforms. The Commission stresses that platforms must go beyond self-declaration for age verification and make the highest level of safety the default.

Wider Implications for Adult Content Platforms

The Commission's scrutiny extends beyond Snapchat: it is also targeting adult content sites such as Pornhub and XNXX, among others, over similar shortcomings in age verification and the protection of minors. The crackdown reflects the EU's firm position that online platforms must implement robust, privacy-preserving measures to keep minors away from inappropriate content.

If found in breach, these platforms could face tough penalties. Such outcomes would reinforce the EU's regulatory course under the DSA, which places heightened responsibility on platforms to protect minors online.